SleepVision: Local AI Sleep Architecture

1 April 2025

I have always been curious about my sleep habits, specifically sleep talking and the minor disturbances that happen while I am completely unconscious. As a teenager I was known to climb out onto the roof in my sleep, and my housemates have recently noticed a few too many strange encounters once the sun goes down. So I thought I might investigate...

There is no shortage of "smart" sleep trackers on the market, but they all share the same critical flaw: privacy. I absolutely refuse to put an internet-connected camera and microphone in my bedroom that streams eight hours of intimate audio and video to a corporate cloud server for "analysis."

I wanted hard data, and I wanted it strictly on my own hardware so that I own it and can explore new ways to analyse it.

So, I engineered SleepVision. It is a 100% local, Raspberry Pi-based orchestration system that captures continuous high-definition video and audio throughout the night, segments it, and uses traditional image/audio processing techniques and local AI models to hunt for anomalies while I sleep.

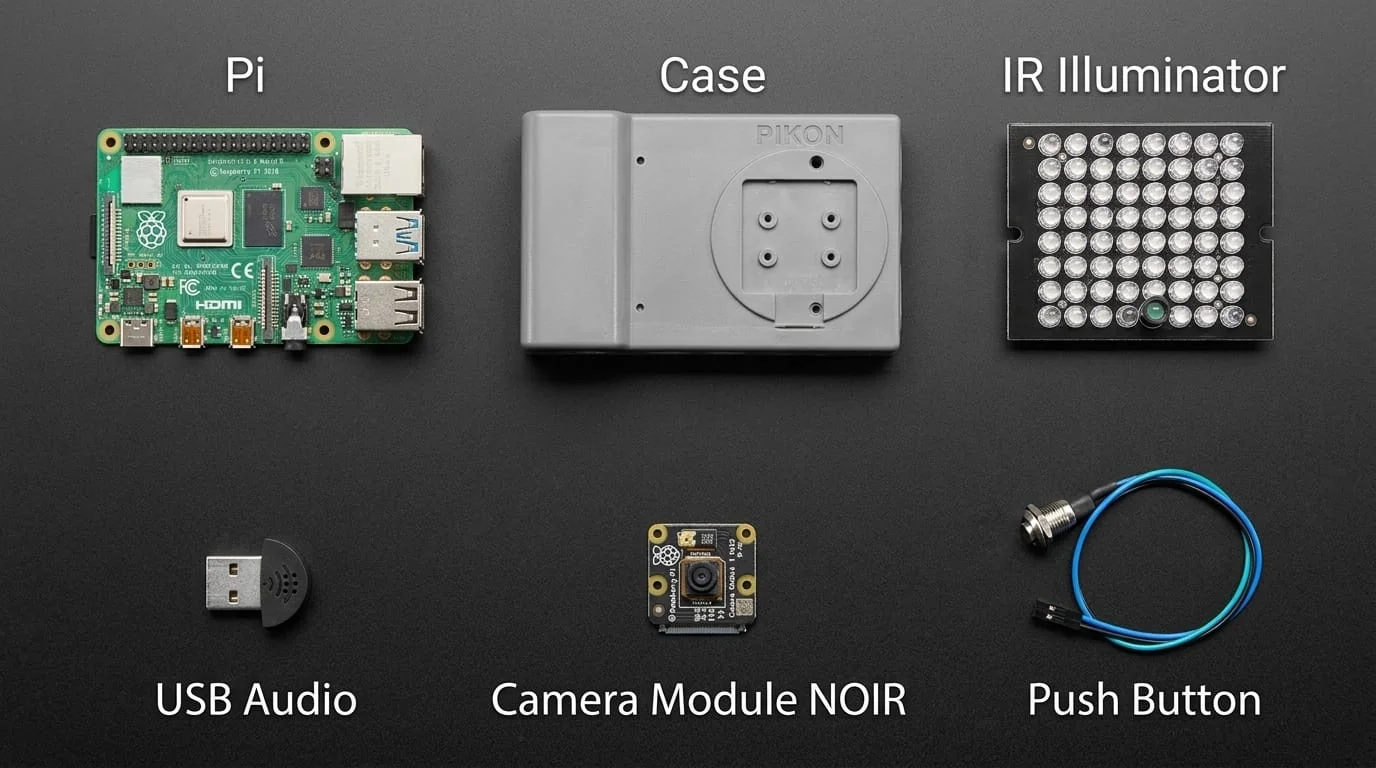

🛠️ The Hardware Architecture

- The Brains: Raspberry Pi 5 (4GB RAM). I am currently offloading the heavy AI image/audio processing workloads to my main PC.

- The Eyes: Raspberry Pi Camera Module 3 NoIR (Wide Angle) for pitch-black infrared visibility, paired with a 48-LED board that floods the room with invisible 940nm infrared light.

- The Ears: A USB PCM2902 audio capture card.

- The Storage: A 500GB+ USB 3.0 SSD (an SD card would quickly wear out under the constant write load of 1080p video).

- The Control: A physical 12mm momentary push button wired to GPIO 21 on the Pi. I literally just press the button when I go to sleep to start the orchestration, and press it again when I wake up.

- The Housing: A resin 3D-printed housing provides protection and a rigid surface to mount the custom-machined articulating arm.
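The start/stop button boils down to a small toggle with debounce. Below is a minimal, hypothetical sketch (the `RecordToggle` class and its callbacks are illustrative names, not SleepVision's actual code). It keeps the GPIO library out of the logic so the behaviour can be tested on any machine.

```python
import time


class RecordToggle:
    """Hypothetical toggle: one press starts recording, the next press stops it.

    Mechanical buttons "bounce", producing several edges per press, so any
    press arriving within `debounce_s` of the last accepted press is ignored.
    """

    def __init__(self, on_start, on_stop, debounce_s=0.5, clock=time.monotonic):
        self.on_start = on_start      # called when recording should begin
        self.on_stop = on_stop        # called when recording should end
        self.debounce_s = debounce_s
        self.clock = clock            # injectable so tests can fake time
        self.recording = False
        self._last_press = None

    def press(self):
        now = self.clock()
        # Ignore presses inside the debounce window.
        if self._last_press is not None and now - self._last_press < self.debounce_s:
            return self.recording
        self._last_press = now
        if self.recording:
            self.on_stop()
        else:
            self.on_start()
        self.recording = not self.recording
        return self.recording
```

On the Pi, assuming the button is wired between GPIO 21 and ground, gpiozero could drive this with `button = Button(21)` followed by `button.when_pressed = toggle.press`. `Button` enables the internal pull-up by default and also accepts a `bounce_time` argument if you would rather debounce there than in software.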

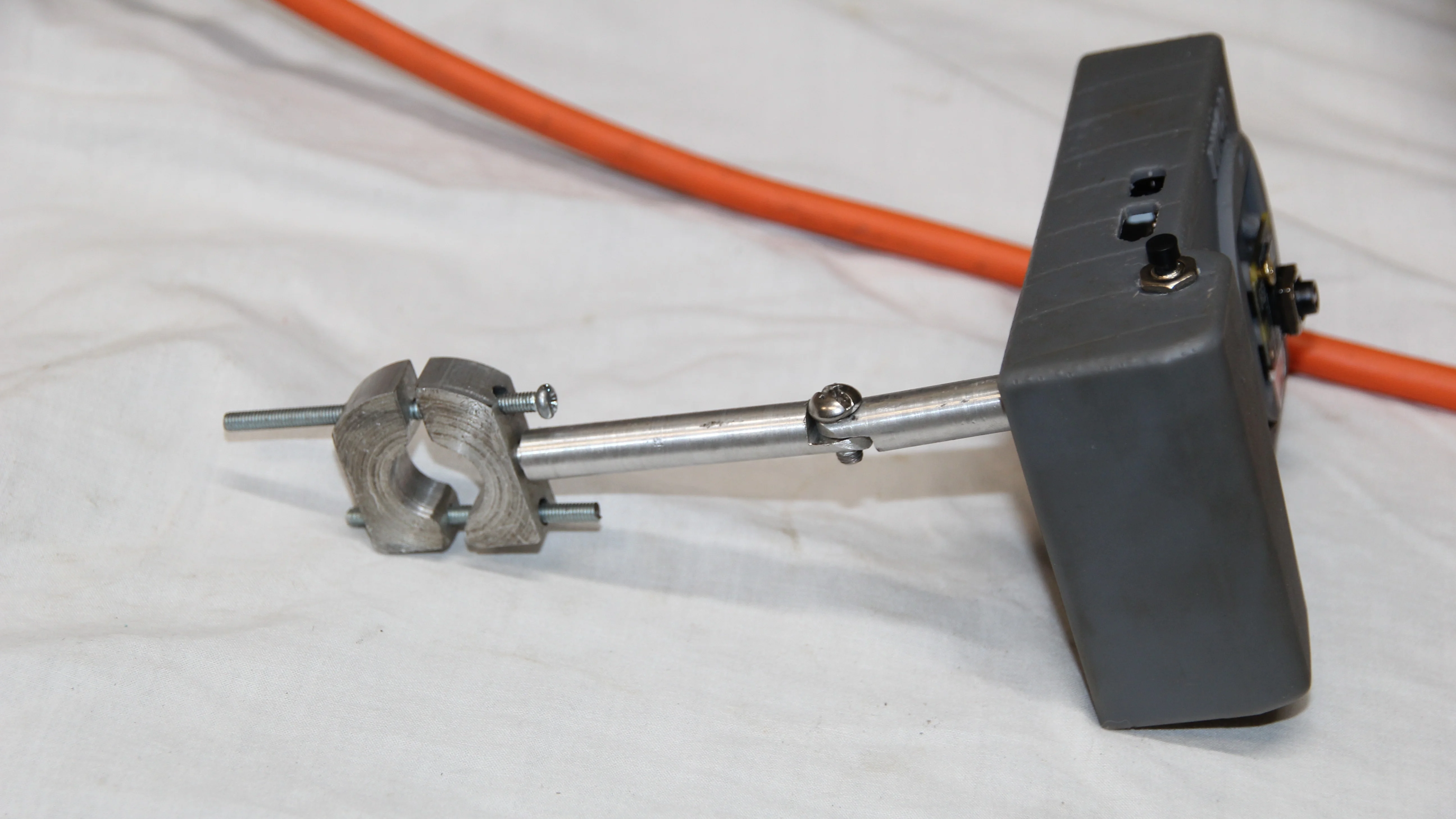

Custom Machined Articulating Arm

To get the perfect overhead angle of the bed, a cheap plastic Amazon tripod wasn't going to cut it. I jumped on my lathe and custom-machined a fully articulating metal mounting arm from scratch.

It features a robust custom clamp designed to bite securely onto my curtain rod, and a friction-hinge system to dial in the exact camera pitch over the bed. Building this from solid metal ensures the camera never sags or drifts out of frame during the night.

Turning components on the lathe

Machining the custom articulating arm and clamping mechanism.

The finished articulating arm bolted to the 3D-printed resin housing.

The final rig deployed, securely clamped to the curtain rod for the perfect overhead angle.

⚙️ The Software Pipeline (How it Works)

Capturing 8 hours of continuous, synced A/V on a Raspberry Pi without dropping frames or melting the CPU is an exercise in hardcore multi-threading and memory management.

I broke the system into two distinct phases:

Phase 1: Nightly Capture (The Edge)

When I hit the record button, the Pi runs my custom Python VideoRecorder orchestrator:

- Variable Frame Rate (VFR) Capture: To prevent CPU bottlenecking, the `FrameGrabber` thread captures images as fast as it can, while a separate `SaveWorker` thread handles the SSD disk I/O.

- Precise Timestamping: Instead of relying on a rigid 30 FPS, the system logs the exact Unix Epoch timestamp for every single frame into a text file.

- Auto-Segmentation: Every 3 minutes, the system cuts the recording and spawns a background multiprocessing task to save the raw audio.
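The grabber/writer split is a classic producer-consumer pattern. Here is a minimal sketch of how it could look, assuming a `capture_frame()` callable that returns one frame and an `open_segment(start_epoch)` factory that returns an object with `write(ts, frame)` and `close()`. All of these names are hypothetical stand-ins for the real camera and file code, and the separate audio multiprocessing task is not shown.

```python
import queue
import threading
import time


def frame_grabber(capture_frame, frames, stop, clock=time.time):
    """Producer: grab frames as fast as the camera allows (variable frame rate)
    and tag each one with its Unix epoch timestamp at capture time."""
    while not stop.is_set():
        frame = capture_frame()
        frames.put((clock(), frame))  # blocks only if the buffer is full
    frames.put(None)  # sentinel: no more frames are coming


def save_worker(frames, open_segment, segment_s=180.0):
    """Consumer: does all disk I/O so SSD writes don't slow the grabber.

    Rolls over to a new segment every `segment_s` seconds (3 minutes by
    default) and hands each frame plus its timestamp to the current segment."""
    seg_start = None
    writer = None
    while True:
        item = frames.get()
        if item is None:
            break
        ts, frame = item
        if seg_start is None or ts - seg_start >= segment_s:
            if writer is not None:
                writer.close()
            seg_start = ts
            writer = open_segment(ts)
        writer.write(ts, frame)  # e.g. save the frame and append ts to the log
    if writer is not None:
        writer.close()


def run_capture(capture_frame, open_segment, duration_s, segment_s=180.0, max_buffered=64):
    """Run both threads for `duration_s` seconds, then shut down cleanly."""
    frames = queue.Queue(maxsize=max_buffered)  # bounded: caps RAM if the SSD stalls
    stop = threading.Event()
    grabber = threading.Thread(target=frame_grabber, args=(capture_frame, frames, stop))
    saver = threading.Thread(target=save_worker, args=(frames, open_segment, segment_s))
    grabber.start()
    saver.start()
    time.sleep(duration_s)  # stand-in for "until the button is pressed again"
    stop.set()
    grabber.join()
    saver.join()
```

The bounded queue is the memory-management lever: if the SSD stalls, `put()` blocks the grabber instead of letting buffered frames eat the Pi's RAM, which just lowers the effective frame rate for a moment. The per-frame timestamps make that harmless, since playback timing comes from the log rather than an assumed 30 FPS.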

Phase 2: Post-Processing (The AI Brain)

When I wake up, the Pi (or my massive local desktop PC) kicks off the heavy lifting:

- The Muxer: A custom `mux_all.py` script uses FFmpeg and the Unix timestamps to mathematically reconstruct the video segments with perfect audio sync, burning a visible timecode into the frame.

- Voice Activity Detection (VAD): The `audio_process.py` pipeline runs the audio through noise reduction and passes it to Silero VAD, which detects human speech against background fan noise.

- Transcription: If speech is detected, the audio chunk is isolated and fed into a local instance of OpenAI Whisper, which transcribes what I said in my sleep. The transcripts are exported to a CSV with frame-accurate timestamps.
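One way to turn per-frame Unix timestamps into correctly timed video is FFmpeg's concat demuxer, where each image gets an explicit `duration`. The sketch below assumes each segment's frames are saved as individual image files alongside a WAV file; that storage layout and the helper names `build_ffconcat` and `mux_command` are assumptions, not necessarily how `mux_all.py` works.

```python
def build_ffconcat(frame_paths, timestamps, fallback_s=1 / 30):
    """Build an FFmpeg concat-demuxer script in which each frame is shown for
    exactly the gap until the next frame's Unix timestamp (variable frame rate)."""
    if not frame_paths or len(frame_paths) != len(timestamps):
        raise ValueError("need one timestamp per frame")
    lines = ["ffconcat version 1.0"]
    for i, (path, ts) in enumerate(zip(frame_paths, timestamps)):
        if i + 1 < len(timestamps):
            duration = timestamps[i + 1] - ts
        elif len(timestamps) > 1:
            duration = ts - timestamps[i - 1]  # reuse the previous gap for the last frame
        else:
            duration = fallback_s
        lines.append(f"file '{path}'")  # paths containing ' would need escaping
        lines.append(f"duration {duration:.6f}")
    # Known concat-demuxer quirk: list the final image again (without a
    # duration) so the last frame's duration is honoured.
    lines.append(f"file '{frame_paths[-1]}'")
    return "\n".join(lines) + "\n"


def mux_command(concat_path, audio_path, out_path):
    """FFmpeg argument list: timed frames + audio -> H.264/AAC with a burned-in timecode."""
    return [
        "ffmpeg", "-y",
        "-f", "concat", "-safe", "0", "-i", concat_path,  # frames with per-frame durations
        "-i", audio_path,
        # Burn elapsed time (HH:MM:SS.mmm) into the top-left corner.
        "-vf", r"drawtext=text='%{pts\:hms}':x=10:y=10:fontsize=36:fontcolor=white:box=1:boxcolor=black",
        "-fps_mode", "vfr",  # keep variable frame timing (FFmpeg >= 5.1; older builds use -vsync vfr)
        "-c:v", "libx264", "-pix_fmt", "yuv420p",
        "-c:a", "aac",
        "-shortest",
        out_path,
    ]
```

`mux_command` only builds the argument list; it would be run with `subprocess.run(cmd, check=True)`. `drawtext` without a `fontfile` relies on an FFmpeg build with fontconfig, and `%{pts\:hms}` shows time elapsed since the start of the segment. For wall-clock time, the `pts` function also accepts a `localtime` format with an epoch offset; check the drawtext documentation for your FFmpeg version.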

Debugging output from my audio processing pipeline, showing the noise-reduction attempts that helped isolate a sleep-talking event against ambient room noise.
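Silero VAD's `get_speech_timestamps()` returns a list of `{'start': ..., 'end': ...}` dicts measured in samples. A minimal sketch of the step after that, converting those offsets into wall-clock events for one 3-minute segment and merging utterances split by brief pauses, could look like this. The 16 kHz rate, the padding, and the merge gap are illustrative assumptions, not tuned values from SleepVision, and `speech_events` is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass
class SpeechEvent:
    start_epoch: float  # Unix time the utterance starts (padded)
    end_epoch: float    # Unix time the utterance ends (padded)


def speech_events(vad_segments, segment_start_epoch, sample_rate=16000,
                  merge_gap_s=1.0, pad_s=0.25):
    """Convert VAD output (sample offsets within one audio segment) into
    padded wall-clock events, merging utterances separated by short pauses."""
    events = []
    for seg in sorted(vad_segments, key=lambda s: s["start"]):
        # Sample offset -> seconds -> absolute Unix time, with a little padding
        # (never padded back past the start of the segment itself).
        start = max(segment_start_epoch,
                    segment_start_epoch + seg["start"] / sample_rate - pad_s)
        end = segment_start_epoch + seg["end"] / sample_rate + pad_s
        if events and start - events[-1].end_epoch <= merge_gap_s:
            # Close enough to the previous utterance: treat as one event.
            events[-1].end_epoch = max(events[-1].end_epoch, end)
        else:
            events.append(SpeechEvent(start, end))
    return events
```

Each event's audio slice (a float32 NumPy array at 16 kHz) could then be passed to openai-whisper's `model.transcribe()`, with `model = whisper.load_model(...)`; the returned dict's `"text"` field holds the transcript.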

📹 The Results

The system works flawlessly. Instead of scrubbing through 8 hours of footage, I wake up to a simple CSV file telling me exactly what time I spoke, what I said, and linking me to the exact 5-second video clip.
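Here is a hedged sketch of that last step: centring a fixed 5-second clip on each utterance, building an FFmpeg command to cut it from the muxed segment, and writing one CSV row per event. The column names, UTC time formatting, and helper names are assumptions for illustration.

```python
import csv
import io
from datetime import datetime, timezone


def clip_window(event_start, event_end, clip_s=5.0):
    """Return (clip_start_epoch, clip_length_s) centred on the utterance."""
    centre = (event_start + event_end) / 2
    return centre - clip_s / 2, clip_s


def clip_command(video_path, segment_start_epoch, clip_start_epoch, clip_s, out_path):
    """FFmpeg argument list that cuts the clip out of a muxed segment."""
    offset = max(0.0, clip_start_epoch - segment_start_epoch)  # seconds into the segment
    return ["ffmpeg", "-y", "-ss", f"{offset:.3f}", "-i", video_path,
            "-t", f"{clip_s:.3f}", out_path]


def report_csv(rows):
    """rows: iterable of (start_epoch, end_epoch, text, clip_path) -> CSV text."""
    out = io.StringIO()
    writer = csv.writer(out)
    writer.writerow(["time_utc", "start_epoch", "end_epoch", "text", "clip"])
    for start, end, text, clip in rows:
        stamp = datetime.fromtimestamp(start, tz=timezone.utc).strftime("%H:%M:%S")
        writer.writerow([stamp, f"{start:.3f}", f"{end:.3f}", text, clip])
    return out.getvalue()
```

Putting `-ss` before `-i` makes FFmpeg seek the input quickly, and because the command re-encodes (no `-c copy`), the cut starts at the requested time rather than snapping to the nearest keyframe. The `csv` module handles quoting, so transcripts containing commas stay in one column.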

🚀 The Future Roadmap

This project is very much active. Capturing the baseline data is just Phase 1. Moving forward, I am planning to:

- Integrate Garmin Data: Cross-reference my Garmin watch's stress/heart rate metrics with the video timestamps to see physical reactions to sleep disturbances.

- Computer Vision Movement Tracking: Use OpenCV to mathematically quantify "tossing and turning" without needing wearable accelerometers.

- Agentic Sleep Optimization: Build a local OpenClaw AI agent that reviews my SleepVision CSVs and Garmin metrics daily, suggesting optimal sleep/wake scheduling based on real historical data.
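For the Garmin cross-reference, the core operation is a nearest-timestamp join between sleep events and the watch's heart-rate or stress samples. This sketch assumes the Garmin data has already been exported as a time-sorted list of Unix timestamps with matching values; how that export happens, the 60-second tolerance, and the helper names are assumptions, since this part of SleepVision doesn't exist yet.

```python
import bisect


def nearest_sample(sample_times, sample_values, t, max_gap_s=60.0):
    """Return the sample value closest in time to t (sample_times must be sorted),
    or None if the nearest sample is more than max_gap_s away."""
    i = bisect.bisect_left(sample_times, t)
    # The closest sample is either just before or just after t.
    candidates = [j for j in (i - 1, i) if 0 <= j < len(sample_times)]
    if not candidates:
        return None
    best = min(candidates, key=lambda j: abs(sample_times[j] - t))
    if abs(sample_times[best] - t) > max_gap_s:
        return None
    return sample_values[best]


def annotate_events(event_times, sample_times, sample_values, max_gap_s=60.0):
    """Pair each sleep-event timestamp with the nearest watch reading."""
    return [(t, nearest_sample(sample_times, sample_values, t, max_gap_s))
            for t in event_times]
```

Binary search keeps each lookup at O(log n), which matters little for one night but adds up when scanning months of history.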

Privacy doesn't mean you can't have advanced AI analytics. It just means you have to build the infrastructure yourself.